Holographic Media

Media is no longer linear. Legacy outlets fade into noise, and communities have become filters through which all platforms are accessed.

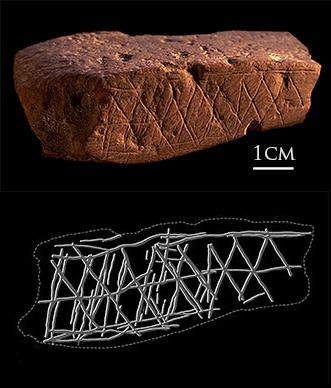

Around 73,000 years ago, early humans in what is now Blombos, South Africa, dipped stones in scarlet ochre and carved into cavernous walls ladder-shaped figures, dots, and handprints to depict their world. The representational image is mimetic—it imitates nature. For Aristotle, the image is a simulation that engenders an emotional response in the viewer as they see themselves in what is being depicted, fictional or otherwise. For Kant, its aesthetic quality can be determined by its attempt at reflecting “natural beauty” or “the appearance of nature.” These human-made images, where the stroke and intent are legible, express the relationship between humans and their environments, their gods, or their myths—between humans and the world.

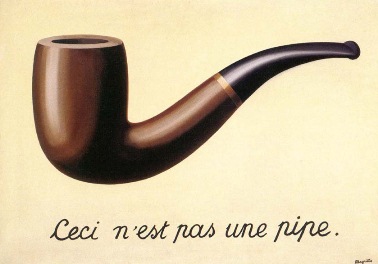

Such images have not always had a good reputation. Sensing danger, many cultures advocated for iconoclasm, the belief that images should be destroyed. Islam notoriously prohibits depictions of the prophet, and Hadith 110 in Book 63 reads “Angels would not enter a house where there are pictures.” Early Christians held similar ideas. Canon 36 from the Council of Elvira in 306 AD reads: “Pictures are not to be placed in churches, so that they do not become objects of worship and adoration.” René Magritte’s painting The Treachery of Images (1928–29) depicts a pipe with the inscription Ceci n’est pas une pipe (“This is not a pipe”). When asked about the sentence, Magritte replied: “Could you stuff my pipe? No, it’s just a representation, is it not?”

Magritte’s intuition that representations are mistaken for the objects themselves, that images usurp reality, was shared by philosopher Vilém Flusser. In his 1983 essay “Towards a Philosophy of Photography,” Flusser mounts one of the best modern criticisms of images. He claims that after the act of image production, the connection between the image and its maker evanesces. “Essentially, this is a question of amnesia. Human beings forget they created the images in order to orientate themselves in the world,” writes Flusser. “Imagination has turned into hallucination.” He believes we misconstrue images, thinking they are “screens” (or representations of the world) when they are “maps” (approximations), and this misattribution leads to a lack of criticality. This amnesia allows images to become objects of worship or adoration in the religious context.

Images produced by what Flusser calls apparatuses are the most susceptible to being mistaken for screens. The photograph is the product of a technological process which captures rays of light onto a photosensitive material, a purely chemical and physical process. As the image production process becomes technical, the technical image comes to seem natural; we see it as something that has always been there. We project a scientific objectivity onto it. The technical image’s claim is pure mimesis. It has achieved its goal of “perfect” representation. It represents the world and thus furthers the instrumentalization of humans. It turns the world into stocks of future photographic moments to be captured. We live with the constant desire to capture every detail of life through our cameras. The camera compels us to collect these moments, to accumulate these stocks. This tool begins as an extension of our desire to imitate “the appearance of nature,” but the relationship reverses: we become its extension, addicted to images. We become the camera’s instrument, the takers of images. The parasitic goal of images is to proliferate, and we have become their servile hosts.

A MidJourney prompt: “/Imagine: A handsome male entrepreneur stands confidently in a tailored suit before a stunning business skyline of Toronto. His warm smile reflects the vibrant mix of modern and historic architecture behind him. He is staring right at the camera with a slight smile – a smile with no teeth. The rising sun casts a golden glow on the scene --ar 3:1”

The output: A polished image. The male wears the hair du jour. Shadows hug his right cheekbone. He looks real, really real. His suit is sharp with sloping shoulders. He wears a skinny tie, and his left pocket holds a matching mouchoir. The skyline is blurry, the shape of the CN Tower visible in the distance. It looks real, really real, as if taken by an apparatus.

Everything about the image is meant to exude credibility. MidJourney aims to make images that respond to prompts provided by users. It receives a prompt, passes it through successive layers of artificial neurons, and puts out an image meant to satisfy the prompter. If the image fails to satisfy, the prompter can ask it to try again. This system is called a neural network. To this day, this technology’s inner workings are not fully understood even by its makers. The input is transformed by the weights on the connections between neurons and by the activations they trigger. The sheer number of these connections makes the interpretation of neural networks extremely difficult, if not completely impossible.

Benjamin Bratton, in his 2021 lecture “The Synthetic and the Real,” cites the distinction made by AI researcher Herbert Simon between “artificial” and “synthetic.” Artificial implies deception, like cubic zirconia cut in the shape of a diamond. A synthetic substance, on the other hand, is deliberately created of the same matter as that which it imitates but composed differently: a lab-grown diamond. Is an image made by MidJourney artificial or synthetic? I posit that the AI image is both. It seeks to be credible enough to deceive. Remember: the Turing test is based not on intelligence itself but on the deception of a human agent. But the AI image is also an image, just composed in a new way. It merges both gestures to become synficial, a deception that becomes what it imitates.

The merging of the synthetic and the artificial reveals the ultimate goal of the image. What does the image want but to no longer be questioned—to finally break the screen and be reality itself? Images, like information, want to be free. The image wants to emancipate itself from its author. The image wants to be the author.

During his keynote at last year’s FWB Fest, David Rudnick noted that a new generation of image viewers will grow up in a world where most of the images are AI-generated. Because of their upbringing, they will find the value of an image not in its author but in the image itself as a standalone object. We can map out this moment of pictorial singularity. The 2022 research paper “Will we run out of data?” estimates the number of images currently on the internet at between 8 and 23 trillion, with a yearly growth rate of 8 percent. If in 2022 AI models generated 10 million images per day with a year-on-year growth rate of 50 percent, the flippening predicted by Rudnick should happen in about twenty-two years.
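
The estimate can be checked with a few lines of Python. This is only a rough sketch under stated assumptions, not necessarily the calculation behind the figure above: it treats the 8 to 23 trillion stock as containing no AI images, compounds both growth rates once a year, and defines the flippening as the first year in which cumulative AI output overtakes that stock. The function name and its parameters are hypothetical, chosen for illustration.

```python
def years_until_flip(stock, ai_per_day=10e6, stock_growth=0.08,
                     ai_growth=0.50, max_years=200):
    """Return the first year in which cumulative AI images exceed the stock.

    Assumptions (not from the cited paper): the starting stock holds no AI
    images, and both growth rates compound once per year.
    """
    ai_per_year = ai_per_day * 365   # AI images generated in the first year
    ai_total = 0.0                   # cumulative AI images so far
    for year in range(1, max_years + 1):
        ai_total += ai_per_year          # add this year's AI output
        stock *= 1 + stock_growth        # the existing stock grows 8 percent
        ai_per_year *= 1 + ai_growth     # next year's AI output grows 50 percent
        if ai_total > stock:
            return year
    return None  # no crossover within max_years


# Lower and upper bounds of the estimated stock of images online.
for starting_stock in (8e12, 23e12):
    print(starting_stock, years_until_flip(starting_stock))
```

Under these assumptions the crossover arrives after 22 years for a starting stock of 8 trillion and after 25 years for 23 trillion. Because AI output compounds so much faster than the stock, nearly tripling the starting stock delays the flippening by only three years.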

The pictorial flippening will also represent the point at which the images in the datasets (unless selected otherwise) will be mostly AI-generated, thus making the models recursive, mirrors of their own creations. Networks will create images to create more images. The artificial will no longer try to mimic the human-made but rather this new amalgam of network-made and human-made. The blurring will be complete, and the modern world will be precipitated into a permanent state of hyperreality, where images will no longer be tethered to a human maker and images will be made for and by machines. These images will depict a world no longer centered on humans. And this world is the true end point of the image. It’s why images are treacherous.

Ruby Justice Thelot is a writer and designer based in New York.

Thanks to Ganbrood for granting permission to illustrate this article with his work.